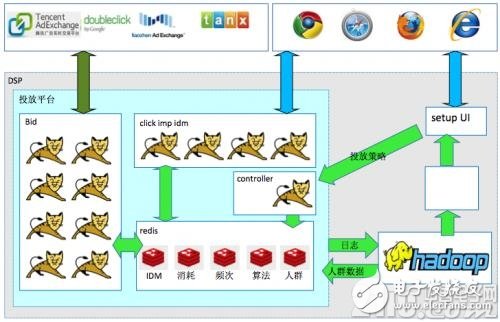

As shown in Figure 7-22, the technical architecture of a DSP comprises a delivery (bidding) engine, a campaign-setup UI, reporting, an algorithm engine, and other modules. The algorithm engine runs big-data processing and machine learning on distributed infrastructure (such as Hadoop). It models user logs and audience data and processes them automatically. Its outputs, such as audience data and algorithm models, are cached in a large in-memory store (such as Redis) so that the Bid engine can query them quickly. Everything is kept in memory because the whole bidding round trip must finish within 100 ms. Of that, the DSP side must complete its processing within 30 ms, and the remaining time is left for network communication. The Bid delivery engine usually runs as a large cluster, which lets it absorb a high volume of concurrent requests while still processing each request within 30 ms. Its delivery rules (budget, frequency capping, delivery policy settings, and so on) are also held in memory for fast lookup. The delivery policy settings themselves are entered by users through the interface of the user-interaction (campaign setup) module. The architecture also includes several important auxiliary modules, such as the ad impression and click data collection module, the ID-mapping module, the big-data reporting module, and the built-in DMP module.

Figure 7-22 Technical architecture overview
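The 100 ms / 30 ms split described above can be sketched as a simple time-budget check. This is a minimal illustration, not the book's implementation. The names `network_allowance_ms` and `bid_within_budget` are hypothetical, as is the injectable `clock` (used so the check can be tested), and the text does not say how the DSP actually enforces its limit. A production engine would cancel work early rather than checking only after the fact.

```python
import time
from typing import Callable, Optional

# Budgets quoted in the text: ~100 ms for the whole bidding round trip,
# of which ~30 ms is reserved for processing inside the DSP.
AUCTION_BUDGET_MS = 100.0
DSP_BUDGET_MS = 30.0


def network_allowance_ms(total_ms: float = AUCTION_BUDGET_MS,
                         dsp_ms: float = DSP_BUDGET_MS) -> float:
    """Time left for network communication once the DSP's share is removed."""
    return total_ms - dsp_ms


def bid_within_budget(compute_bid: Callable[[], Optional[float]],
                      clock: Callable[[], float] = time.monotonic,
                      budget_ms: float = DSP_BUDGET_MS) -> Optional[float]:
    """Run the (hypothetical) bid computation; drop the answer if it overran.

    Returning None stands for a no-bid response: a late bid would be
    discarded by the exchange anyway, so it is not worth sending.
    """
    start = clock()
    bid = compute_bid()
    elapsed_ms = (clock() - start) * 1000.0
    if elapsed_ms > budget_ms:
        return None
    return bid


if __name__ == "__main__":
    print(network_allowance_ms())  # 70.0
```

Keeping every lookup in memory is what makes a fixed budget like this realistic. A single disk or remote-database round trip could consume a large part of the 30 ms.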

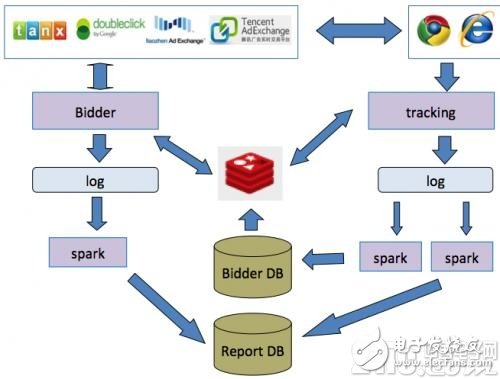

2. Overview of the DSP internal technical process

The DSP's internal technical processing relies on a few key facilities:
- a massive raw-log system;
- a massively parallel message-processing queue (for example, built with Spark);
- a massive in-memory system (for example, built with Redis);
- a relational database for business data.

As shown in Figure 7-23, there are two processing lines.

The first line handles ad requests. The Bidder processes a huge volume of real-time ad requests and generates a large amount of raw logs. At the same time, it constantly reads and writes request-related data such as frequency and spend in the in-memory system. The parallel processing queue then turns the ad request logs into entries in the reporting database and the corresponding big-data audience and model databases.

The second line collects ad feedback data such as impressions and clicks. It also begins with a large volume of raw logs, while the data collection engine writes impression- and click-related frequency and spend data to the in-memory system. The parallel processing queue then turns the impression and click logs into entries in the reporting database and the audience and model databases. In parallel, the queue runs large-scale machine-intelligence analysis that updates part of the audience and model data. It writes those updates to the databases and content systems that the Bidder uses for real-time bidding.

Figure 7-23 Overview of DSP internal technical process
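The two write paths described above can be illustrated with a small single-process sketch:
- a real-time path that keeps frequency and spend counters current for the Bidder;
- a batch path that rolls raw log records up into report rows.

The text names Redis and Spark for these roles. Here, plain Python structures stand in for both. The event names (`request`, `impression`, `click`), the `LogRecord` fields and the function names are all hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class LogRecord:
    kind: str              # hypothetical event names: "request", "impression", "click"
    campaign_id: str
    user_id: str
    cost: float = 0.0      # spend attributed to this event


class InMemoryCounters:
    """Plain-dict stand-in for the in-memory store (Redis in the text)."""

    def __init__(self) -> None:
        self.frequency: Counter = Counter()  # (campaign, user) -> impressions served
        self.spend: Counter = Counter()      # campaign -> budget consumed so far

    def record(self, rec: LogRecord) -> None:
        # Real-time write path: keep frequency and spend current for the Bidder.
        if rec.kind == "impression":
            self.frequency[(rec.campaign_id, rec.user_id)] += 1
        self.spend[rec.campaign_id] += rec.cost


def aggregate_report(records: Iterable[LogRecord]) -> Dict[str, Dict[str, float]]:
    """Batch path (a Spark job in the text): raw logs -> per-campaign report rows."""
    report: Dict[str, Dict[str, float]] = defaultdict(
        lambda: {"request": 0, "impression": 0, "click": 0, "spend": 0.0})
    for rec in records:
        row = report[rec.campaign_id]
        row[rec.kind] = row.get(rec.kind, 0) + 1
        row["spend"] += rec.cost
    return dict(report)


if __name__ == "__main__":
    logs = [
        LogRecord("request", "c1", "u1"),
        LogRecord("impression", "c1", "u1", cost=0.002),
        LogRecord("click", "c1", "u1"),
    ]
    counters = InMemoryCounters()
    for rec in logs:
        counters.record(rec)
    print(counters.frequency[("c1", "u1")], aggregate_report(logs)["c1"])
```

Separating the two paths mirrors the text. The Bidder needs counters that are correct within milliseconds, while reports and model updates can tolerate batch latency.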

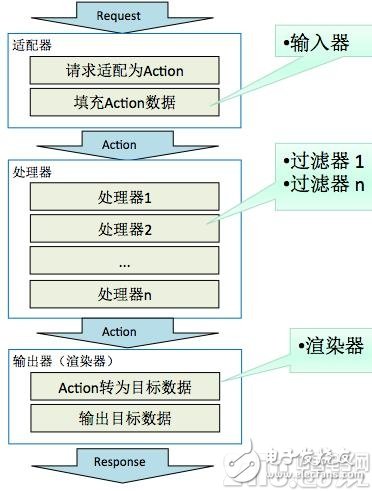

3. Outline of the DSP core processing flow ("30ms")

As shown in Figure 7-24, the core design constraint of the DSP Bidder's bidding module is its very short processing time: 30 ms. Different ADXs send traffic through different interfaces, so the module uses the adapter design pattern. When an ad request is received and when the response is returned, a separate adapter handles each ADX platform's interface, while the overall processing flow stays the same. The business logic in between uses the filter design pattern, so a new business requirement can be implemented by adding a filter. As a result, the processing framework of the Bidder's core bidding module stays stable and does not change as the business evolves. This gives the module strong business agility and good horizontal scalability.

Figure 7-24 Example of the core DSP processing flow
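The combination of adapters at the edges and a filter chain in the middle can be sketched as follows. This is illustrative only. The two exchange payload formats, the `Ad`/`BidRequest` types, `size_filter`, and `handle` are hypothetical and do not correspond to any real ADX protocol.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Protocol


@dataclass
class Ad:
    ad_id: str
    size: str


@dataclass
class BidRequest:
    """Exchange-neutral view of an ad request used by the core flow."""
    request_id: str
    slot_size: str
    floor: float
    candidates: List[Ad] = field(default_factory=list)


class AdxAdapter(Protocol):
    def parse(self, raw: Dict) -> BidRequest: ...
    def render(self, req: BidRequest, ad: Optional[Ad]) -> Dict: ...


class ExchangeAAdapter:
    """Hypothetical ADX whose payload uses 'id', 'size' and 'floor'."""
    def parse(self, raw: Dict) -> BidRequest:
        return BidRequest(raw["id"], raw["size"], raw["floor"])

    def render(self, req: BidRequest, ad: Optional[Ad]) -> Dict:
        return {"id": req.request_id, "ad": ad.ad_id if ad else None}


class ExchangeBAdapter:
    """Hypothetical ADX with width/height fields and a floor price in cents."""
    def parse(self, raw: Dict) -> BidRequest:
        size = f"{raw['w']}x{raw['h']}"
        return BidRequest(raw["reqId"], size, raw["bidfloor_cents"] / 100)

    def render(self, req: BidRequest, ad: Optional[Ad]) -> Dict:
        return {"reqId": req.request_id, "creative": ad.ad_id if ad else ""}


# A filter receives the request and returns it with a narrowed candidate list.
Filter = Callable[[BidRequest], BidRequest]


def size_filter(req: BidRequest) -> BidRequest:
    req.candidates = [ad for ad in req.candidates if ad.size == req.slot_size]
    return req


def handle(raw: Dict, adapter: AdxAdapter, filters: List[Filter],
           inventory: List[Ad]) -> Dict:
    """Core flow, identical for every exchange: adapt in, filter chain, adapt out."""
    req = adapter.parse(raw)
    req.candidates = list(inventory)
    for apply_filter in filters:
        req = apply_filter(req)
        if not req.candidates:
            break  # nothing left to bid with
    # Ranking belongs to the bidding stage; here the first survivor is returned.
    chosen = req.candidates[0] if req.candidates else None
    return adapter.render(req, chosen)
```

Supporting a new exchange means writing one more adapter class. Supporting a new business rule means appending one more function to `filters`. `handle` itself does not change in either case, which is the stability the text describes.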

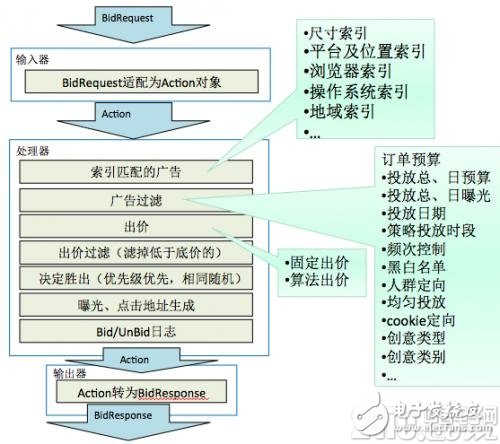

4. Outline of the bidding process

As shown in Figure 7-25, the Bidder's auction processing is divided into the following steps.

First, an indexer quickly filters ads. An indexer makes lookups very efficient, but it can only filter on simple conditions, such as size, platform and ad slot, browser, operating system, and region indexes.

Second, ad filtering applies the delivery-policy rules whose filter conditions must be computed, such as budget, impressions, dates, frequency, audience targeting, and creative type. Together, these two layers of filtering produce, for each ad request, the list of candidate ads eligible for delivery.

Third, the bidding algorithm computes a bid for each ad in the list. Either a dynamic bidding algorithm or a fixed bid may be used. Which bid strategy applies, including whether to use an algorithm at all, is set manually in the delivery settings screen.

Fourth, reserve-price filtering removes candidates whose bids fall below the reserve price carried in the ad request.

Fifth, the candidates are sorted and a winner is chosen. Ranking combines each candidate's bid with the priority weight assigned by the algorithm, and the top-ranked ad wins and is returned with its ad content for the bid response.

Sixth, dynamic impression and click tracking code is generated from that ad content. This code serves several purposes, such as anti-fraud and carrying delivery parameters for tracking.

Finally, once processing ends, a bid/no-bid log record is written asynchronously.

Figure 7-25 Example of the bidding process
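Steps one through six of the bidding process above can be condensed into one function. This is a minimal sketch under several assumptions:
- the `Ad` fields are hypothetical;
- the "dynamic" bid is modeled as `base_bid * pctr`, a placeholder for whatever algorithm the delivery settings select;
- ranking uses `bid * weight`;
- the tracking parameters are signed with HMAC-SHA256 and a demo key.

None of these specifics come from the text. The asynchronous bid/no-bid logging step is omitted.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Dict, List, Optional, Set, Tuple
from urllib.parse import urlencode

Index = Dict[Tuple[str, str], Set[str]]


@dataclass
class Ad:
    ad_id: str
    attrs: Dict[str, str]   # simple indexable targeting: size, os, region, ...
    budget_left: float
    freq_cap: int
    base_bid: float
    dynamic: bool = False   # bid strategy, chosen in the delivery settings screen
    weight: float = 1.0     # priority weight, assumed to come from the algorithm


def build_index(ads: List[Ad]) -> Index:
    """Inverted index: (attribute, value) -> ids of the ads targeting that value."""
    index: Index = {}
    for ad in ads:
        for key, value in ad.attrs.items():
            index.setdefault((key, value), set()).add(ad.ad_id)
    return index


def run_auction(request: Dict[str, str], floor: float, user_freq: Dict[str, int],
                ads: List[Ad], index: Index, pctr: float = 1.0,
                secret: bytes = b"demo-secret") -> Optional[Tuple[str, float, str]]:
    by_id = {ad.ad_id: ad for ad in ads}
    # 1. Indexer: intersect the posting lists of the request's simple attributes.
    ids: Optional[Set[str]] = None
    for key, value in request.items():
        posting = index.get((key, value), set())
        ids = posting if ids is None else ids & posting
    candidates = [by_id[i] for i in sorted(ids or set())]
    # 2. Ad filtering: rules that need computation (budget, frequency, ...).
    candidates = [ad for ad in candidates
                  if ad.budget_left > 0 and user_freq.get(ad.ad_id, 0) < ad.freq_cap]
    # 3. Bidding: fixed bid, or a hypothetical pCTR-scaled dynamic bid.
    bids = [(ad, ad.base_bid * pctr if ad.dynamic else ad.base_bid) for ad in candidates]
    # 4. Reserve-price filtering against the floor carried in the request.
    bids = [(ad, bid) for ad, bid in bids if bid >= floor]
    if not bids:
        return None  # no-bid
    # 5. Rank by bid x priority weight; the top entry wins (ties keep id order).
    winner, price = max(bids, key=lambda pair: pair[1] * pair[0].weight)
    # 6. Dynamic tracking parameters, signed so tampered clicks can be rejected.
    query = urlencode({"ad": winner.ad_id, "price": f"{price:.4f}"})
    sig = hmac.new(secret, query.encode(), hashlib.sha256).hexdigest()
    return winner.ad_id, price, f"{query}&sig={sig}"
```

The cheap index lookup runs first so that the more expensive per-ad rule checks and bid computations only touch ads that already match the request's basic attributes.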

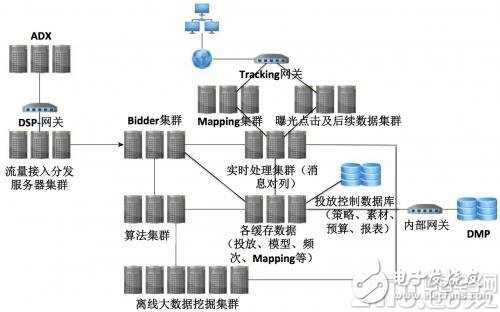

5. Distributed Cluster Overview

As shown in Figure 7-26, the DSP must handle a large volume of ad auctions and run on distributed big-data computing infrastructure. To meet both needs, its system architecture must support distributed capacity expansion. The architecture supports high concurrency, big data, high availability, and high fault tolerance.

Figure 7-26 Distributed Cluster Overview Example
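The text does not say how capacity is added to the cluster. One common technique for scaling out a sharded in-memory tier is consistent hashing, although the source does not name it. Keys such as user IDs are mapped onto a hash ring, so adding a node moves only the keys the new node takes over. The `HashRing` class, its 100 virtual nodes per server, and the choice of SHA-256 are assumptions for illustration.

```python
import bisect
import hashlib
from typing import Dict, List


def _hash(key: str) -> int:
    # First 8 bytes of SHA-256 as a position on a 64-bit ring.
    return int.from_bytes(hashlib.sha256(key.encode()).digest()[:8], "big")


class HashRing:
    """Consistent-hash ring mapping keys (e.g. user IDs) to cluster nodes."""

    def __init__(self, nodes: List[str], replicas: int = 100) -> None:
        self.replicas = replicas           # virtual nodes per physical node
        self._points: List[int] = []       # sorted ring positions
        self._owner: Dict[int, str] = {}   # ring position -> node name
        for node in nodes:
            self.add(node)

    def add(self, node: str) -> None:
        """Scale out: only keys hashing just before the new points change owner."""
        for i in range(self.replicas):
            point = _hash(f"{node}#{i}")
            if point not in self._owner:
                self._owner[point] = node
                bisect.insort(self._points, point)

    def node_for(self, key: str) -> str:
        """Walk clockwise to the first virtual node at or after the key's hash."""
        idx = bisect.bisect_left(self._points, _hash(key)) % len(self._points)
        return self._owner[self._points[idx]]
```

With plain `hash(key) % n` sharding, changing `n` would remap almost every key. On the ring, only the keys claimed by the added node's virtual points move, which keeps expansion cheap for an in-memory store serving live bids.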
