The power of neural networks is plain to see, but they usually need large amounts of training data whose distribution resembles that of the target test domain. Inductive logic programming, which works in the symbolic domain, needs only a small amount of data, but it cannot cope with noise and its range of application is narrow.

In a recently published paper, DeepMind proposed a differentiable inductive logic programming method, ∂ILP. It solves the symbolic tasks at which traditional inductive logic programming excels, tolerates some noise and errors in the training set, and is trained by gradient descent.

How does it work? Let's look at DeepMind's explanation of the method on its official blog:

Imagine you are playing football. The ball is at your feet, and you decide to pass it to a striker who is unmarked. This seemingly simple act requires two different kinds of thinking.

First, you recognize that there is a ball at your feet. This requires intuitive, perceptual thinking: you cannot easily explain how you know the ball is there.

Second, you decide to pass the ball to a particular striker. This decision requires conceptual thinking, and it rests on a reason: you pass to that striker because nobody is marking her.

This distinction interests us because these two kinds of thinking correspond to two different approaches to machine learning: deep learning and symbolic program synthesis.

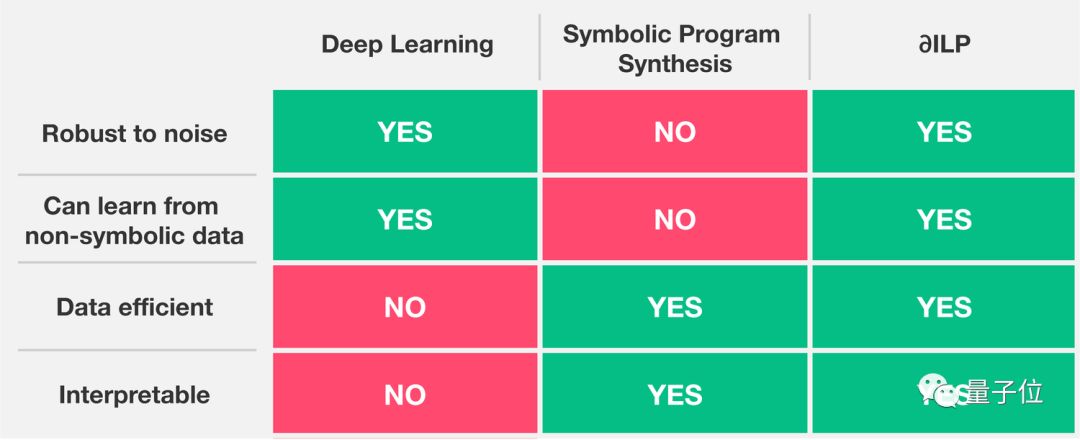

Deep learning focuses on intuitive, perceptual thinking, while symbolic program synthesis focuses on conceptual, rule-based thinking. Each has its own strengths. Deep learning systems cope well with noisy data, but they are hard to interpret and need large amounts of training data. Symbolic systems are easier to interpret and need less training data, but they break down when they encounter noisy data.

Human cognition combines these two ways of thinking seamlessly, but it is unclear whether, or how, this combination can be replicated in an AI system.

Our recent paper in the Journal of Artificial Intelligence Research shows that a system can combine intuitive, perceptual thinking with conceptual, interpretable reasoning. The ∂ILP (Differentiable Inductive Logic Programming) system we describe is robust to noise, data-efficient, and produces interpretable rules.

We use an induction task to demonstrate how ∂ILP works:

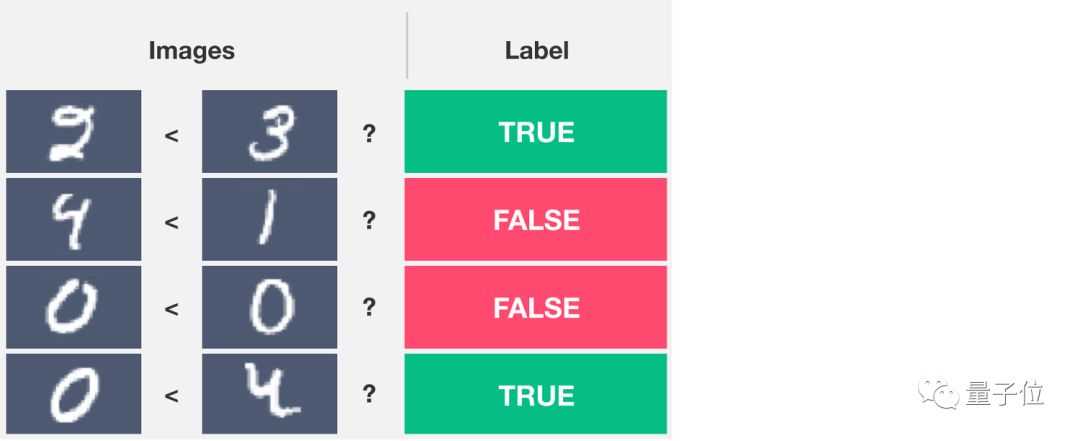

Given a pair of images that each show a digit, the system must output a 0 or 1 label indicating whether the digit in the left image is less than the digit in the right image, as shown in the following figure:

Solving this problem involves both kinds of thinking. Recognizing the digit in an image requires intuitive, perceptual thinking; understanding the "less than" relation in general requires conceptual thinking.

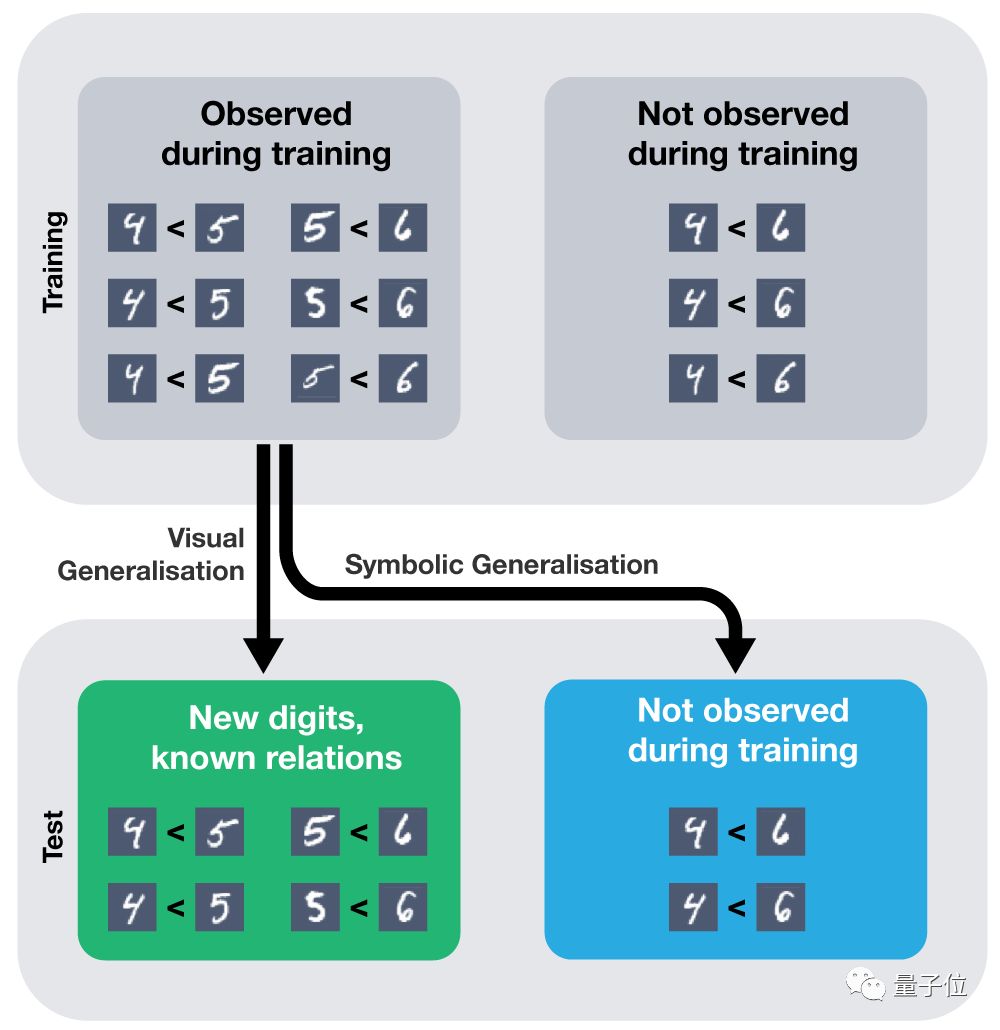
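The conceptual half of the task can be written as a tiny logic program. The sketch below is an illustration under stated assumptions: it takes the digit labels as already recognized (the perceptual half is not modelled), hand-writes a recursive definition of "less than" over a successor relation, and evaluates it by naive forward chaining. The names `less_than_facts` and `label` are hypothetical.

```python
def less_than_facts(digits):
    """Forward-chain a two-clause definition of lt/2 until no new facts appear."""
    # Background knowledge: succ(X, Y) holds when Y = X + 1.
    succ = {(x, x + 1) for x in digits if x + 1 in digits}
    # Clause 1: lt(X, Y) :- succ(X, Y).
    lt = set(succ)
    while True:
        # Clause 2: lt(X, Y) :- lt(X, Z), succ(Z, Y).
        derived = {(x, y) for (x, z) in lt for (z2, y) in succ if z == z2}
        if derived <= lt:  # fixed point: nothing new was derived
            return lt
        lt |= derived


def label(left_digit, right_digit, lt):
    """Return 1 if left < right according to the derived facts, else 0."""
    return 1 if (left_digit, right_digit) in lt else 0


lt = less_than_facts(range(10))
print(label(3, 7, lt), label(7, 3, lt))  # 1 0
```

Because neither clause mentions a particular digit, the program gives the right answer for every pair, including pairs that never appeared as examples.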

In fact, given sufficient training data, a standard deep learning model (such as a convolutional neural network combined with an MLP) can learn to solve this problem effectively. Once trained, it also classifies new images it has never seen before correctly.

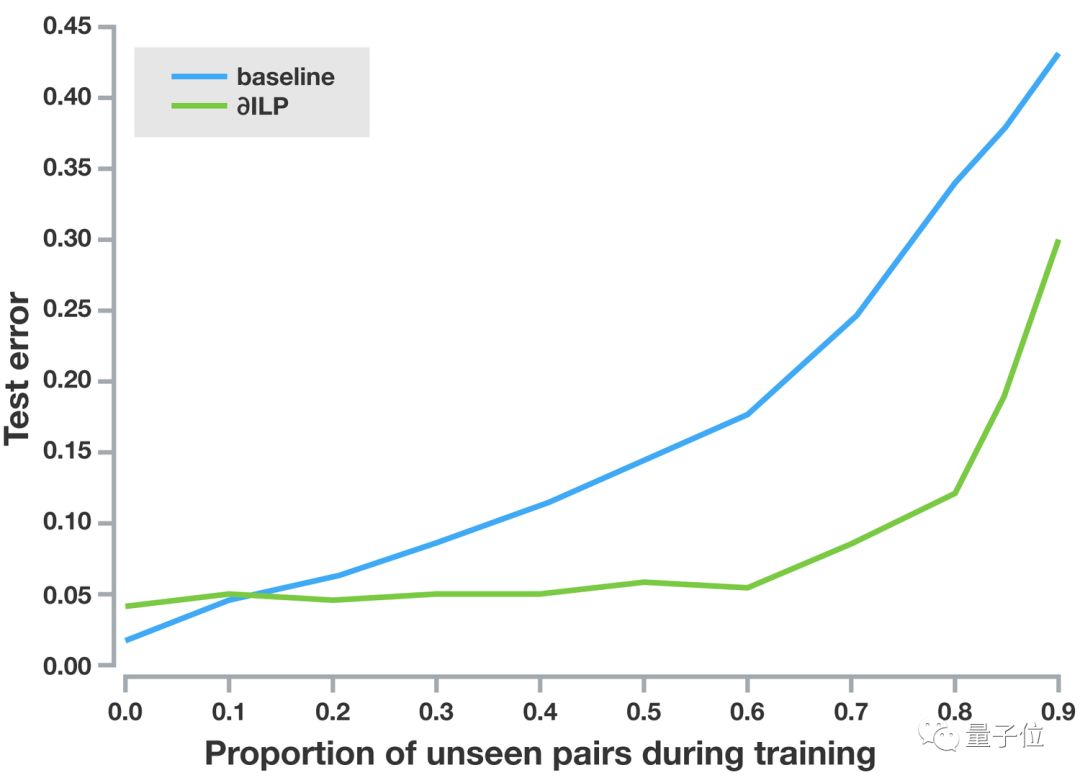

However, it generalizes properly only if it has been given multiple examples of every pair of digits. The model excels at visual generalization: for example, when every pair of digits in the test set has already been seen during training, it easily generalizes to new images (see the green squares below). But it cannot generalize symbolically: for example, it cannot generalize to numbers it has never seen (see the blue squares below).

Researchers such as Gary Marcus and Joel Grus have recently pointed out this limitation.

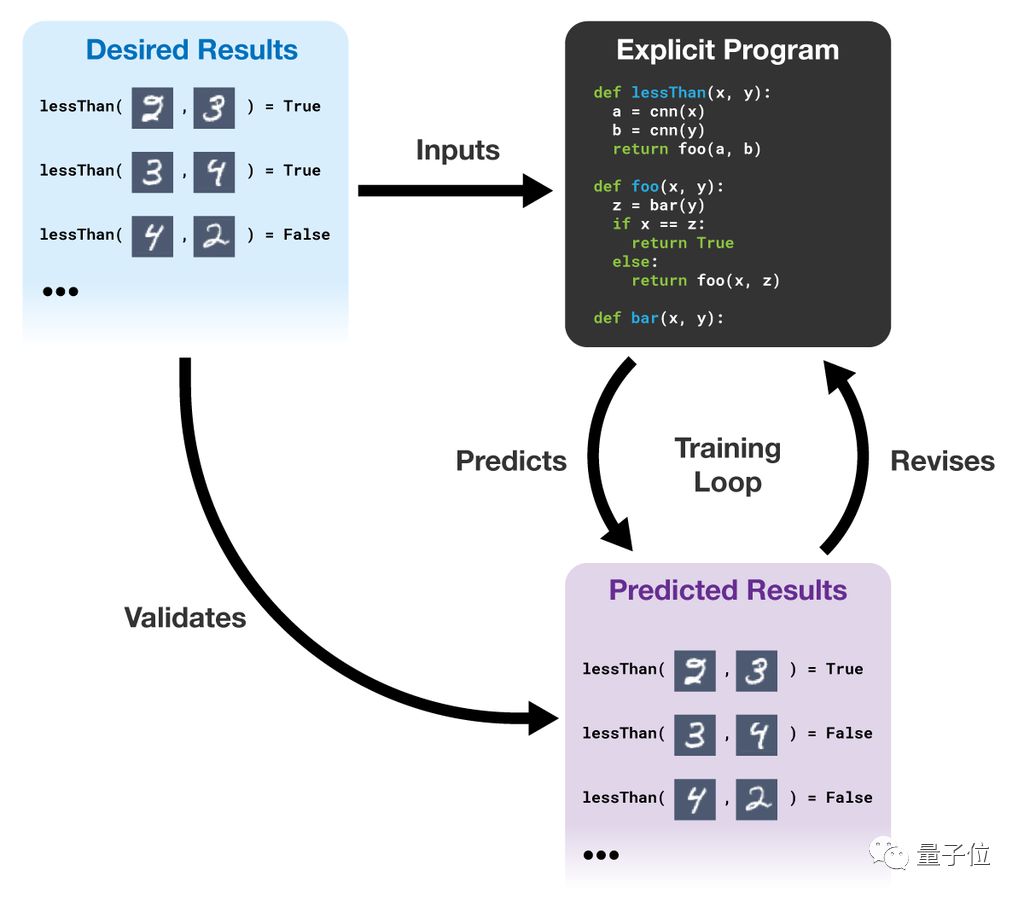
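The difference between the two kinds of test can be made concrete by how the digit pairs are split. This is a hypothetical sketch, not the paper's protocol: the function name, the 40% training fraction and the fixed seed are all assumptions. The visual test reuses pairs seen in training (with new images), while the symbolic test consists only of pairs never seen at all.

```python
import random
from itertools import product


def split_digit_pairs(train_fraction=0.4, seed=0):
    """Hypothetical split of the 100 (left, right) digit pairs."""
    pairs = list(product(range(10), repeat=2))
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train_pairs = set(rng.sample(pairs, round(train_fraction * len(pairs))))
    # Visual generalization: fresh images, but only of pairs seen in training.
    visual_test_pairs = sorted(train_pairs)
    # Symbolic generalization: pairs that never occur in training.
    symbolic_test_pairs = [p for p in pairs if p not in train_pairs]
    return sorted(train_pairs), visual_test_pairs, symbolic_test_pairs


train, visual_test, symbolic_test = split_digit_pairs()
print(len(train), len(symbolic_test))  # 40 60
```

A model that has only learned a mapping from seen pairs to labels can do well on `visual_test` yet has nothing to go on for `symbolic_test`, which is exactly where a learned rule helps.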

Unlike standard neural networks, ∂ILP can generalize symbolically; and unlike standard symbolic programs, it can also generalize visually. ∂ILP learns explicit programs from examples, and these programs are readable, interpretable and verifiable. Given a set of examples (that is, the expected outputs, labelled "desired results" in the figure below), ∂ILP generates a program that satisfies them. It searches the space of programs by gradient descent: if the program's outputs conflict with the desired outputs in the reference data, the system revises the program so that it matches the data better.
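To see how gradient descent can search a space of programs, here is a heavily simplified toy in the spirit of ∂ILP, not its actual implementation. Assumptions: three hand-written candidate programs for `lt/2` (∂ILP generates its candidates from templates), a softmax weight per candidate, differentiable forward chaining (product for "and", max over Z for "exists", probabilistic sum to merge conclusions), and finite-difference gradients in place of automatic differentiation. Training uses only pairs of the digits 0 to 3; the check at the end covers all pairs of 0 to 5.

```python
import numpy as np

N = 6                  # toy domain: constants 0..5
T = N                  # number of forward-chaining steps
SUCC = np.eye(N, k=1)  # SUCC[x, y] = 1 iff y == x + 1


def prob_sum(a, b):
    # Fuzzy "or": a + b - a*b keeps values in [0, 1].
    return a + b - a * b


def chain(lt):
    # Body lt(X,Z), succ(Z,Y): product for "and", max over Z for "exists".
    return (lt[:, :, None] * SUCC[None, :, :]).max(axis=1)


# Hypothetical candidate programs; each maps a valuation of lt to its consequences.
CANDIDATES = {
    "lt(X,Y):-succ(X,Y). lt(X,Y):-lt(X,Z),succ(Z,Y).": lambda lt: prob_sum(SUCC, chain(lt)),
    "lt(X,Y):-succ(Y,X). lt(X,Y):-lt(X,Z),succ(Z,Y).": lambda lt: prob_sum(SUCC.T, chain(lt)),
    "lt(X,Y):-succ(X,Y). lt(X,Y):-succ(Y,X).": lambda lt: prob_sum(SUCC, SUCC.T),
}
NAMES = list(CANDIDATES)
PROGRAMS = list(CANDIDATES.values())


def softmax(theta):
    e = np.exp(theta - theta.max())
    return e / e.sum()


def infer(theta):
    # Run T steps of weighted, differentiable forward chaining from no facts.
    weights = softmax(theta)
    lt = np.zeros((N, N))
    for _ in range(T):
        step = sum(w * f(lt) for w, f in zip(weights, PROGRAMS))
        lt = prob_sum(lt, step)
    return lt


# Training examples: every pair of the digits 0..3 only.
pairs = [(x, y) for x in range(4) for y in range(4)]
xs = np.array([p[0] for p in pairs])
ys = np.array([p[1] for p in pairs])
labels = (xs < ys).astype(float)


def loss(theta):
    # Binary cross-entropy between inferred truth values and the labels.
    v = np.clip(infer(theta)[xs, ys], 1e-7, 1 - 1e-7)
    return -np.mean(labels * np.log(v) + (1 - labels) * np.log(1 - v))


def numeric_grad(f, theta, eps=1e-5):
    # Central differences; a real system would use automatic differentiation.
    grad = np.zeros_like(theta)
    for i in range(len(theta)):
        d = np.zeros_like(theta)
        d[i] = eps
        grad[i] = (f(theta + d) - f(theta - d)) / (2 * eps)
    return grad


theta = np.zeros(len(PROGRAMS))
for _ in range(400):
    theta -= 0.5 * numeric_grad(loss, theta)

best = NAMES[int(np.argmax(softmax(theta)))]
pred = infer(theta) > 0.5
truth = np.arange(N)[:, None] < np.arange(N)[None, :]
print(best)
print("all pairs of 0..5 correct:", bool((pred == truth).all()))
```

The weight shifts toward the only candidate that never derives a false fact, and because that candidate is a rule rather than a table, it also labels the pairs involving 4 and 5 that were never used in training.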

The training process of ∂ILP is shown in the following figure:

∂ILP generalizes symbolically, given enough (x, y) examples.

The graph above summarizes our "less than" experiment. The blue curve shows a standard deep neural network, which fails to generalize correctly to pairs of numbers it has never seen. By contrast, the green curve shows that ∂ILP still maintains a low test error even when only 40% of the pairs were used in training. This shows that ∂ILP can generalize symbolically.

We believe our system goes some way toward answering the question of whether symbolic generalization can be achieved in deep neural networks. In the future, we plan to integrate ∂ILP-like systems into reinforcement learning agents and larger deep learning modules, giving them the ability to both reason and react.
