Authors: Lydia T. Liu, Sarah Dean, Esther Rolf, Max Simchowitz, Moritz Hardt

Participation: Liu Tianci, Xiaokun

Machine learning systems can absorb bias from historical data and go on to behave in discriminatory ways. For that reason, it is widely considered necessary in some applications to constrain a system's behavior with fairness criteria, in the expectation that doing so will protect disadvantaged groups and benefit them in the long run. Recently, the Berkeley Artificial Intelligence Research (BAIR) lab published a blog post examining the long-term impact of static fairness criteria, and found that the results are far from what people expect. The related paper has been accepted at ICML 2018.

Machine learning systems trained to minimize prediction error often exhibit discriminatory behavior with respect to sensitive characteristics such as race and gender, and historical bias in the data may be one of the reasons. Machine learning has long been criticized, in applications such as lending, hiring, criminal justice, and advertising, for potentially harming groups that have historically been underrepresented or disadvantaged.

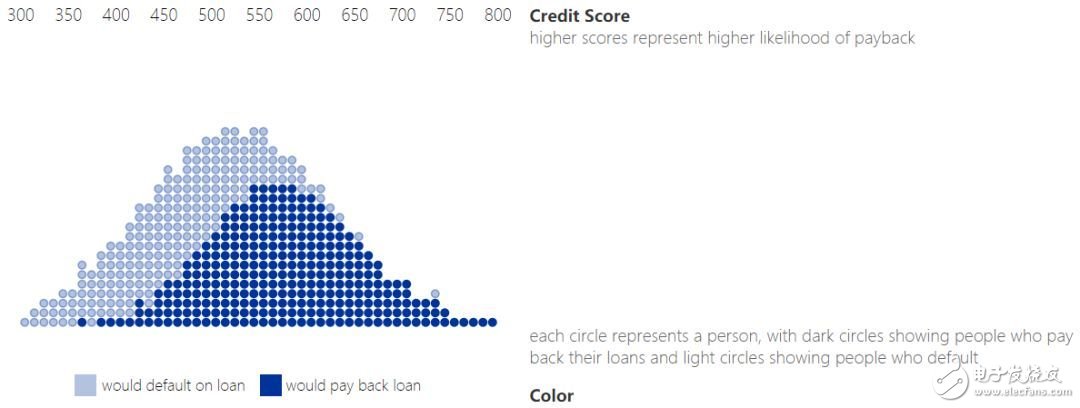

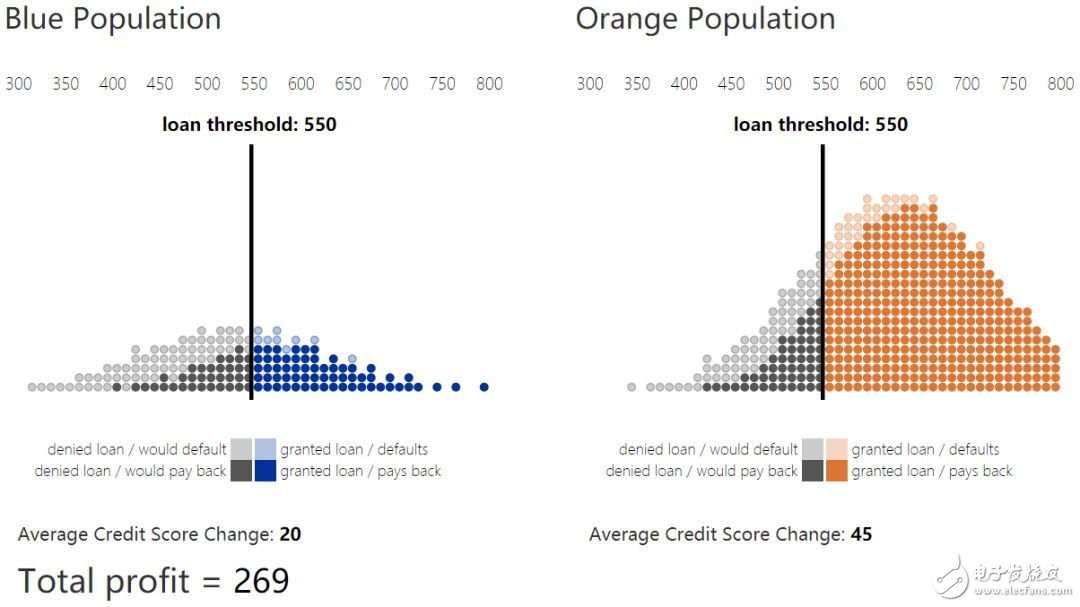

This post discusses the researchers' recent work on making machine learning decisions that serve long-term social welfare. Typically, a machine learning model produces a score that summarizes information about an individual, and decisions about that individual are made from the score. For example, a credit score summarizes someone's credit history and financial behavior to help banks assess their creditworthiness. We use this lending scenario as a running example throughout the text. Every group of users has its own distribution of credit scores, as shown in the following figure.

Figure 1. Credit scores and repayment distribution

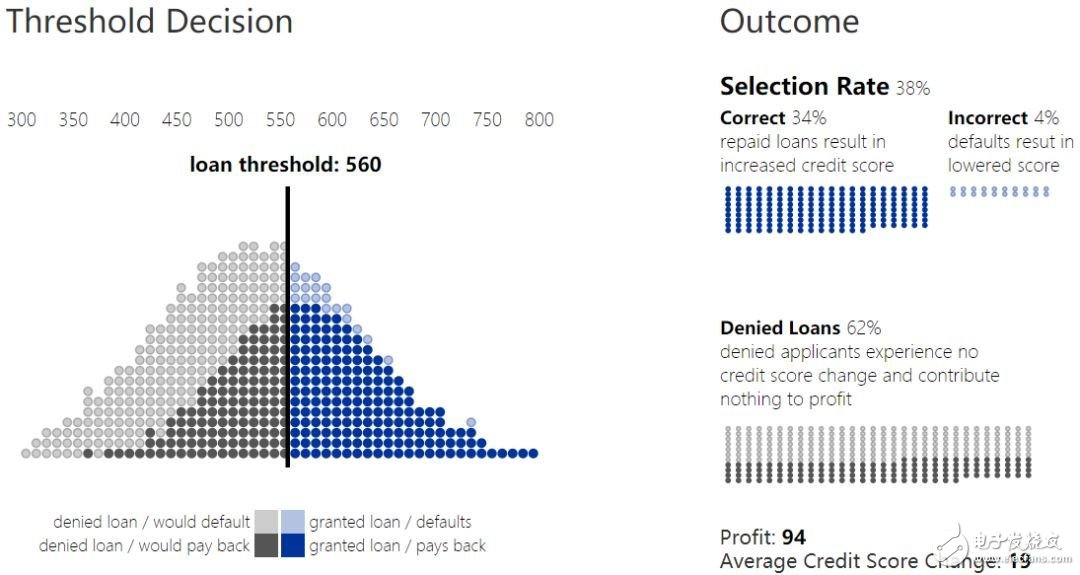

By defining a threshold, a score can be turned into a decision. For example, a person who scores above the lending threshold gets a loan, while a person who scores below it is rejected. This decision rule is called a threshold policy. The score can be understood as an encoding of the estimated probability that a loan will be repaid. For example, 90% of people with a credit score of 650 are expected to repay their loans. As a result, a bank can estimate its expected return on loans to users with a credit score of 650. Similarly, it can predict the expected return on loans to all users with a credit score above 650 (or any given threshold).
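The threshold policy described above can be sketched in a few lines of Python. This is only an illustration: the score table `APPLICANTS`, its counts, and its repayment probabilities are made-up numbers (only the 90% figure at a score of 650 echoes the text), and the function names are hypothetical.

```python
# Hypothetical score table: score -> (number of applicants, estimated repayment probability).
# The numbers are illustrative assumptions, not data from the post or the paper.
APPLICANTS = {
    500: (100, 0.50),
    580: (200, 0.75),
    650: (300, 0.90),
    720: (400, 0.95),
}


def approve(score, threshold):
    """Threshold policy: lend exactly when the score reaches the threshold."""
    return score >= threshold


def expected_repayment_rate(applicants, threshold):
    """Expected fraction of approved applicants who repay their loans."""
    approved = [(n, p) for s, (n, p) in applicants.items() if approve(s, threshold)]
    total = sum(n for n, _ in approved)
    if total == 0:
        return None  # nobody is approved at this threshold
    return sum(n * p for n, p in approved) / total


if __name__ == "__main__":
    print(approve(650, 650), approve(580, 650))
    print(expected_repayment_rate(APPLICANTS, 650))
```

Because the score stands in for a repayment probability, the expected repayment rate above a threshold is just a count-weighted average of the probabilities of the approved scores.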

Figure 2. Loan thresholds and outcomes

Absent other considerations, a bank will try to maximize its total profit. The profit depends on the ratio between the amount gained from a repaid loan and the amount lost on a defaulted loan. In the chart above, the ratio of gain to loss is 1:-4. Because a default costs more than a repayment earns, the bank lends more conservatively and raises its lending threshold. We call the fraction of the population scoring above this threshold the selection rate.
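To make the effect of the 1:-4 ratio concrete: a loan to someone who repays with probability p has expected profit p·1 + (1 − p)·(−4) = 5p − 4, which is positive only when p exceeds 0.8. The sketch below applies this to a made-up score table; `APPLICANTS` and all function names are illustrative assumptions, not the researchers' code or data.

```python
# Hypothetical score table: score -> (number of applicants, estimated repayment probability).
APPLICANTS = {
    500: (100, 0.50),
    580: (200, 0.75),
    650: (300, 0.90),
    720: (400, 0.95),
}


def break_even_probability(gain=1.0, loss=-4.0):
    """Repayment probability at which a loan has zero expected profit."""
    return -loss / (gain - loss)


def expected_profit(applicants, threshold, gain=1.0, loss=-4.0):
    """Expected bank profit when lending to everyone at or above the threshold."""
    total = 0.0
    for score, (n, p) in applicants.items():
        if score >= threshold:
            total += n * (p * gain + (1 - p) * loss)
    return total


def selection_rate(applicants, threshold):
    """Fraction of the population that scores at or above the threshold."""
    population = sum(n for n, _ in applicants.values())
    selected = sum(n for s, (n, _) in applicants.items() if s >= threshold)
    return selected / population


def profit_maximizing_threshold(applicants, gain=1.0, loss=-4.0):
    """Candidate threshold (one of the observed scores) with the highest expected profit."""
    return max(applicants, key=lambda t: expected_profit(applicants, t, gain, loss))


if __name__ == "__main__":
    best = profit_maximizing_threshold(APPLICANTS)
    print(break_even_probability(), best, selection_rate(APPLICANTS, best))
```

In this toy table the bank stops lending at the lowest score whose repayment probability is still above the break-even value, which is the conservative behavior the text describes.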

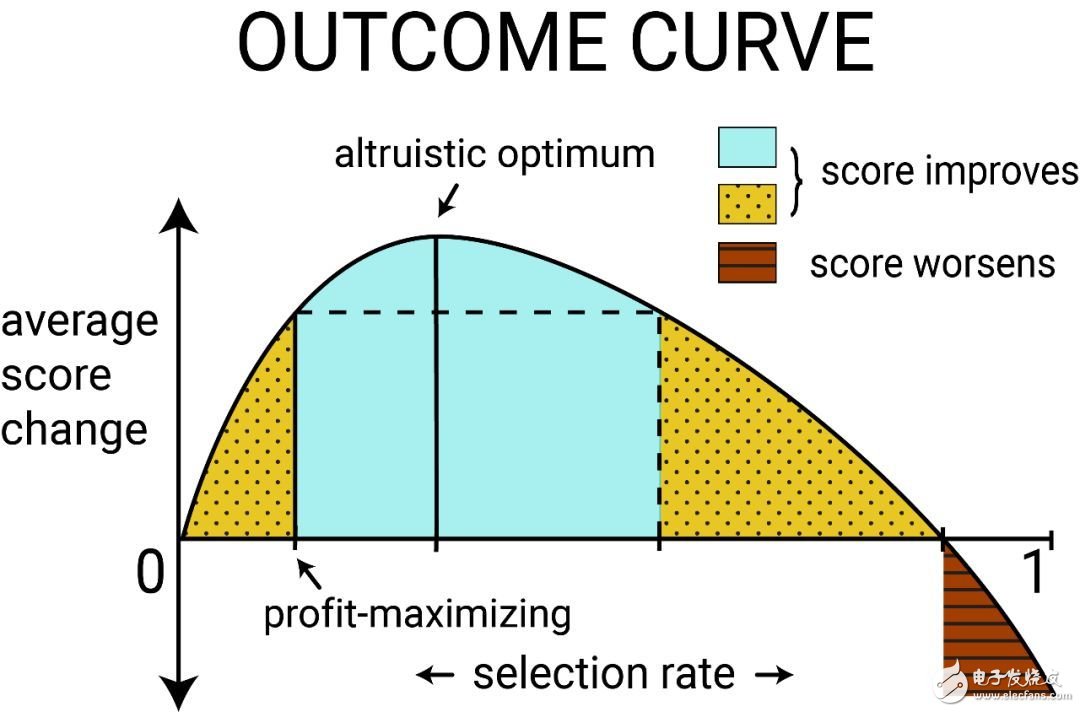

Outcome curve

Loan decisions affect not only the bank but also individuals. When a loan defaults (the borrower cannot repay), the bank loses money and the borrower's credit score also drops. When a loan is repaid, the bank makes a profit and the borrower's credit score rises. In this example, the ratio of credit score changes is 1 (repayment) : -2 (default).

Under a threshold policy, the outcome is defined as the expected change in a group's score. It can be written as a function of the selection rate, and this function is called the outcome curve. As a group's selection rate changes, so does its outcome. These group-level outcomes depend on the probability of repayment (which is derived from the score) and on the costs and benefits of individual loan decisions.

The figure above shows the outcome curve for a typical group. When enough individuals in a group receive loans and repay them, the group's average credit score is likely to rise. In that case, unconstrained profit maximization yields a positive average score change. As the bank deviates from profit maximization and lends to more people, the average score change grows until it reaches its maximum; we call this point the altruistic optimum. The selection rate can also be raised further, to a point where the average score change is lower than under unconstrained profit maximization but still positive: this is the region marked by yellow dotted shading in the figure, and selection rates in this region are said to cause relative harm. If too many borrowers are unable to repay, however, the average score falls (the average score change becomes negative) and the curve enters the region shaded with red horizontal lines.
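As a rough illustration of an outcome curve, the sketch below lends to the highest scores first and tracks the group's mean score change as the selection rate grows, using the 1 : -2 score change ratio from the text. Under that ratio, a borrower who repays with probability p has an expected score change of 3p − 2. The score table and the function name `outcome_curve` are hypothetical, and averaging over the whole group (including those who receive no loan and see no change) is a modeling assumption of this sketch.

```python
# Hypothetical score table: score -> (number of applicants, estimated repayment probability).
APPLICANTS = {
    500: (100, 0.50),
    580: (200, 0.75),
    650: (300, 0.90),
    720: (400, 0.95),
}


def outcome_curve(applicants, repay_change=1.0, default_change=-2.0):
    """Return (selection_rate, mean_score_change) pairs, lending to the highest scores first.

    The mean is taken over the whole group; people without a loan contribute zero change.
    """
    population = sum(n for n, _ in applicants.values())
    curve = [(0.0, 0.0)]  # lending to nobody changes nothing
    selected = 0
    total_change = 0.0
    for score in sorted(applicants, reverse=True):
        n, p = applicants[score]
        selected += n
        total_change += n * (p * repay_change + (1 - p) * default_change)
        curve.append((selected / population, total_change / population))
    return curve


if __name__ == "__main__":
    curve = outcome_curve(APPLICANTS)
    for rate, change in curve:
        print(f"selection rate {rate:.1f}: mean score change {change:+.2f}")
    # The selection rate with the largest mean score change plays the role of the altruistic optimum.
    print(max(curve, key=lambda point: point[1]))
```

With these made-up numbers the mean score change keeps rising past the point where a profit-maximizing bank (gain to loss 1:-4) would stop lending, then falls once borrowers with low repayment probability are included.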

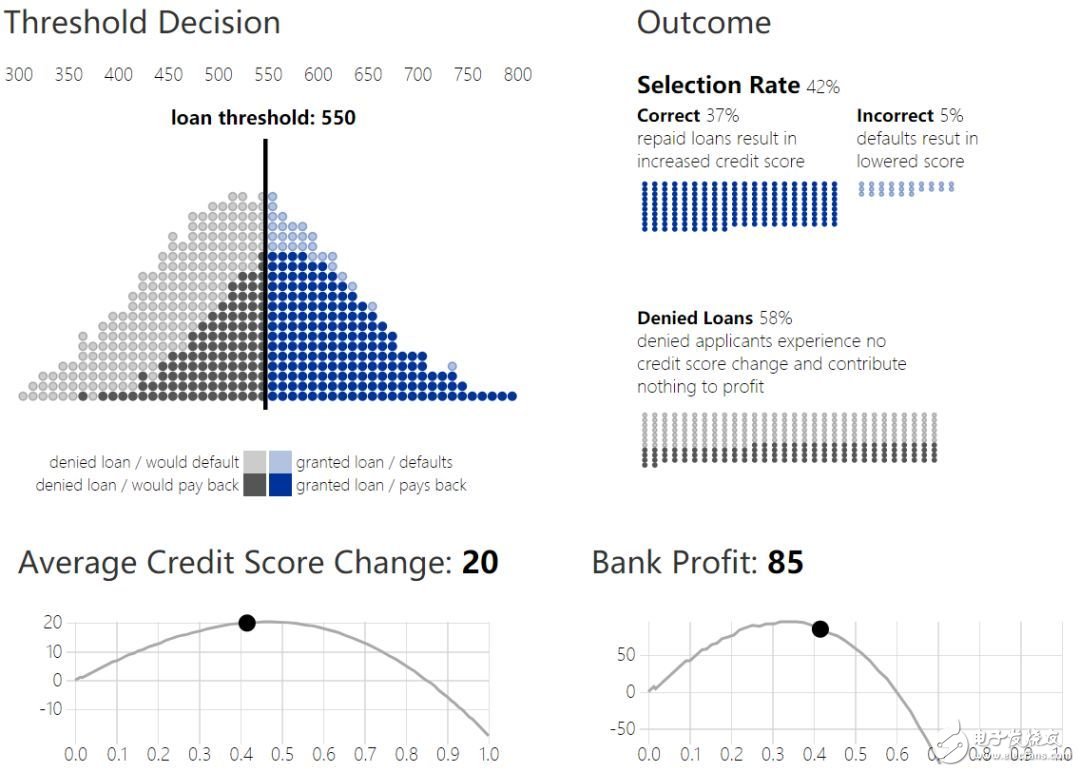

Figure 4. Loan threshold and outcome curve

Multi-group situation

How does a given threshold policy affect individuals in different groups? Two groups with different credit score distributions will experience different outcomes.

Suppose a second group has a different credit score distribution from the first and is also smaller; we can think of it as a historically disadvantaged group. We show this group in blue, and we want to ensure that the bank's lending policy does not unreasonably harm or shortchange its members.

We assume that the bank can choose a different threshold for each group. Although group-dependent thresholds may face legal challenges, fairness criteria cannot avoid them if they are to prevent the differential outcomes that a single fixed threshold might produce.

Figure 5. Loan decisions for different groups

A natural question is which choice of thresholds can be expected to improve the score distribution of the blue group. As mentioned above, an unconstrained bank maximizes profit: it sets the threshold at the break-even point and makes every loan that is profitable. In fact, the profit-maximizing threshold (a credit score of 580) is the same for both groups.

Fairness criterion

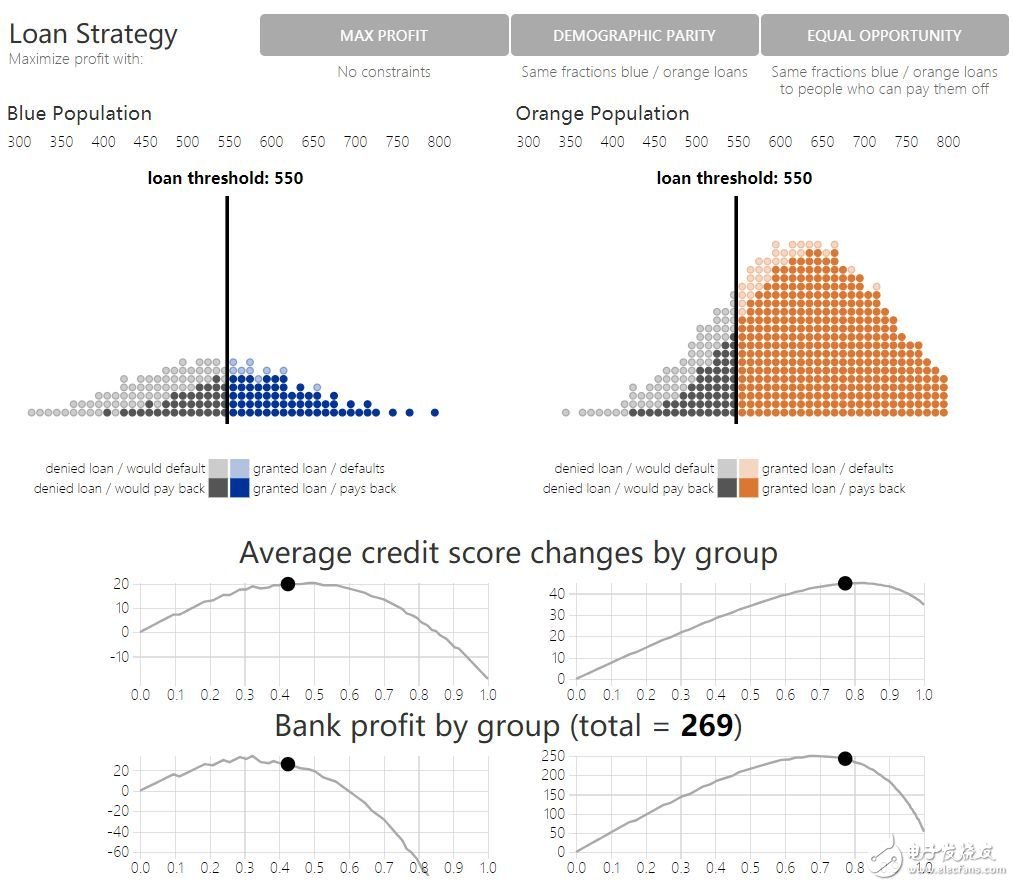

Groups with different score distributions have differently shaped outcome curves (the top half of Figure 6 in the original post shows outcome curves obtained from real credit score data and a simple outcome model). An alternative to unconstrained profit maximization is a fairness constraint, which equalizes the decisions made for different groups with respect to some objective function. A variety of fairness criteria have been proposed, appealing to intuition as a way of protecting disadvantaged groups. With an outcome model, we can formally answer whether fairness constraints really encourage more positive outcomes.

A common fairness criterion, demographic parity, requires the bank to lend to the same proportion of each group; subject to this requirement, the bank still maximizes profit as far as possible. Another criterion, equality of opportunity, equalizes the true positive rate across the two groups: among the individuals who would repay a loan, the bank must lend to the same proportion in each group.

Although these criteria are reasonable ways of requiring fairness in static decisions, they largely ignore the future effects of those decisions on group outcomes. Figure 6 illustrates this by comparing the policies that result from profit maximization, demographic parity, and equal opportunity, along with the bank profit and credit score change under each lending strategy. Compared with profit maximization, both demographic parity and equal opportunity reduce the bank's profit, but do they give the blue group a better outcome than profit maximization does? Relative to the altruistic optimum, the profit-maximizing strategy lends too little to the blue group, the equal opportunity strategy lends too much, and demographic parity lends so much that it reaches the region of relative harm.
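The two criteria can be checked numerically by comparing selection rates and true positive rates across groups. The sketch below does this for two made-up groups and a chosen pair of group-specific thresholds; `GROUP_A`, `GROUP_B`, and the function names are hypothetical, and because the toy scores are discrete the gaps will generally not be exactly zero.

```python
# Hypothetical score tables: score -> (number of people, estimated repayment probability).
GROUP_A = {500: (100, 0.50), 580: (200, 0.75), 650: (300, 0.90), 720: (400, 0.95)}
GROUP_B = {500: (40, 0.50), 580: (30, 0.75), 650: (20, 0.90), 720: (10, 0.95)}  # smaller "blue" group


def selection_rate(group, threshold):
    """Fraction of the group that receives a loan."""
    population = sum(n for n, _ in group.values())
    return sum(n for s, (n, _) in group.items() if s >= threshold) / population


def true_positive_rate(group, threshold):
    """Among people expected to repay, the fraction that receives a loan."""
    repayers = sum(n * p for n, p in group.values())
    approved_repayers = sum(n * p for s, (n, p) in group.items() if s >= threshold)
    return approved_repayers / repayers


def demographic_parity_gap(group_a, group_b, threshold_a, threshold_b):
    """Absolute difference in selection rates; zero means demographic parity holds."""
    return abs(selection_rate(group_a, threshold_a) - selection_rate(group_b, threshold_b))


def equal_opportunity_gap(group_a, group_b, threshold_a, threshold_b):
    """Absolute difference in true positive rates; zero means equal opportunity holds."""
    return abs(true_positive_rate(group_a, threshold_a) - true_positive_rate(group_b, threshold_b))


if __name__ == "__main__":
    # The same threshold for both groups leaves a large gap in selection rates.
    print(demographic_parity_gap(GROUP_A, GROUP_B, 650, 650))
    # Lowering the threshold for the smaller group narrows the true positive rate gap.
    print(equal_opportunity_gap(GROUP_A, GROUP_B, 650, 580))
```

A constrained bank would search over threshold pairs for the most profitable one whose gap is (close to) zero; that search is omitted here to keep the sketch short.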

Figure 6. Simulated loan decisions under constraints

If the goal of a fairness criterion is to "improve or equalize the long-term well-being of all groups", what has just been shown suggests that in some scenarios the criterion actually works against that goal. In other words, fairness constraints can further reduce the well-being of a group that is already disadvantaged. Building an accurate model that predicts how a policy will affect group outcomes may mitigate the unintended harm caused by introducing fairness constraints.

Thinking about outcomes in "fair" machine learning

The researchers propose a view of fairness in machine learning that is based on long-term outcomes. Without a careful model of delayed outcomes, it is not possible to predict the effect of adding a fairness criterion to a classification system. If an accurate outcome model is available, however, positive outcomes can be optimized more directly than through existing fairness criteria. Specifically, the outcome curve shows how far to deviate from the profit-maximizing strategy in order to improve outcomes the most.

The outcome model is a concrete way of bringing domain knowledge into the classification process, and it agrees well with the many studies that point to the context-sensitive nature of fairness in machine learning. The outcome curve provides an interpretable visual tool for navigating these application-specific trade-offs.

Please read the original paper for more details. The paper will appear at the 35th ICML conference this year. This study is only a preliminary exploration of how outcome models can mitigate the unintended effects of machine learning algorithms on society. The researchers believe that, as machine learning algorithms come to affect more people's lives, more research will be needed to ensure the long-term fairness of these algorithms.

Paper: Delayed Impact of Fair Machine Learning

Paper link: https://arxiv.org/pdf/1803.04383.pdf
